Posts tagged “lisa”

-

LISA 2016 - Day 0/1/2

LISA again! This is the fifth? (Washington, Baltimore, San Diego, San Jose, Seattle, and now Boston) sixth! that I've been to. Saturday's flight in was fairly uneventful, except a) it didn't bother my sciatica too much, so yey and b) I forgot my coat on the plane, and it doesn't look like Air Canada has a working system to take "Hey, did you see a coat?" calls.

Fortunately I can count on the kindness of Andy Seely, who brought an extra coat and loaned it to me. For his kindness I have given him a "Taggart Transcontinental" t-shirt, and let him buy me supper. I'm nothing if not generous.

Sunday I spent the entire day at the Google SRE tutorial, which was very, very cool; a big part of it was an exercise to architect a system that would read and join logfiles. It took a long time to wrap my head around how everyone was thinking about this, but writing down the moving parts made it all a lot clearer. In the end, my team's proposal approximated the final example config presented by Google, so that was good. Final sol'n, BTW, used 101 machines. The math all worked out, but it still made my jaw drop. When I asked the presenters about this, they grinned. "We've forgotten how to count small," one of them said.

Today was spent in "Everything you ever wanted to know about operating systems but were afraid to ask", aka "Caskey's Brain Dump". It was a pretty awesome talk, covering everything from silicon through filesystems. Well worth it; I'd love a recording of it, since the slides simply don't do it justice.

-

CAN I ROCK BUSINESS WITH YOU?

Today's title from the subject line of some spam I just got. ("a spam"? "a spammy email"? just "spam"?)

Mystery flu-like illness continues, or at least its fallout; I've had lower back pain for the last ~ 4 weeks. Doctor says removing spine is "not an option" but I've done some Googling and

$WORK continues apace. After taking a week of Python training, we're using Go for a new tool we're building. Haven't got a good sense for what it's like just yet, but so far I don't seem to be making a mess of things.

Tried out drone.io at $WORK yesterday and holy god, is it good. Auth with our internal Github, then activate repos, and boom! it runs tests on every new commit on any branch, watches for PRs, the whole nine yards. When I think of the amount of work we had to do to get Jenkins to do this, it's insane. Plus the whole run-as-a-Docker-container, fire-up-sibling-docker-containers-for-tests thing is very, very impressive.

Sportsball has started up again with a vengeance: practices on Monday and Wednesday, games on Fridays and Saturdays. Somebody stop this merry-go-round!

I've registered for LISA 16, woot! This will be my fifth -- wait, sixth? -- LISA, ten years after my first time attending. Not sure who's gonna be the theme band this year -- I've done New Pornographers, Josh Rouse, Soul Coughing and Sloan. And since he's co-chair this year, it seems like a good time to pull out that picture of Matt Simmons (@standaloneSA) as a PHP dev:

-

LISA14 pico-bloggity

This year I'm blogging for the USENIX blog, so we'll see how much I actually put up here...but the thought of going w/o updating my own just makes me sad, so here we go.

Took the bus down, which was completely uneventful and pleasant. Walked from King St station to the conference hotel, which was a bit of a hike but welcome exercise. I'm on the 25th floor and have a pretty skookum view of local neon and such. Got supper and some groceries, then went out for drinks w/Matt and his wife Amy, Pat Cable (who I'm meeting in person now for the first time), Bob and Alf, and Ken Schumacher. Good times, with lots of good teasings of Matt in as well. Missing Ben Cotton, which is a shame; the two of us could pretty much get Matt to cry if we tried hard enough.

First tutorial this AM was "Stats for Ops", and it was amazing. Discovered that using a spreadsheet is a really good skill to have. I have to learn that at some point...

And now off for next tutorial.

-

Is that what happened?

So my latest blog post for LISA just got posted -- and that's the last long(ish) one; next week BeerOps, Mark Lamourine and I will be posting daily updates as we're there. Also, I've volunteered to help Julie Miller, the Marketing Communications Manager for USENIX, with the opening orientation on Saturday night. I seem to remember taking that the first year I went, though I don't seem to have written it down...

By the way, shouts out to BeerOps, Mark Lamourine, Matt Simmons and Noah Meyerhans for all the help during LISA Bloggity Sprint 2014. There are beers/chocolate/what-you-owed in plentitude.

On another note: I'm auditioning a Chromebook, an Acer C720, to see how it works out. Right now I'm using Debian Jessie (testing) via Crouton, which lets you install Linux to a chroot within Chrome. So far: the keyboard is smaller than I'm used to, and the Canadian keyboard in particular is annoying -- they've crammed in tons of extra keys and split the Enter and Shift keys to do so. But overall it's okay; I can run tests for Yogurty in 3 seconds (cf. 12 on my old P3 laptop/server), and even Stellarium seems to run just fine. I've got a refurbished 4GB model on order w/Walmart in the states, and I can pick that up while I'm at LISA. So, you know, looking good.

Bridget Kromhout's latest post, The First Rule of DevOps Club, is awesome. Quote:

But when the open space opening the next day had an anecdote featuring "ops guys", I'd had enough. I went up, took the mic, and told the audience of several hundred people (of whom perhaps 98% were guys) how erased I feel when I hear that.

I said what I always think (and sometimes say) when this comes up. If you are a guy, and you like to date women, would you place a personal ad that says this? "I'd like to meet a wonderful guy to fall in love and spend my life with. This guy must like long walks on the beach and holding hands, and must also be female." If that sounds ludicrous to you, then you don't actually think "guy" is gender-neutral.

That's a small part of a much longer post; go read the rest.

Much at $WORK; I've got a new team mate from Belgium who's awesome, I'm starting to find a sense of rhythm, and organizing time is as challenging as ever. There are lots, LOTS of fun things to do, and it's damn hard sometimes to say "I'm just gonna put that on the TODO list and walk away."

This week my youngest son has switched from "The Wizard of Oz" to "Treasure Island" for story time. He got bored of TWOO and we didn't finish it; I'm curious to see how long he'll stick with TI. Still so much fun to read to them both.

-

To the beat of the rhythm of the night

Busy, yo:

This was my first week on call at $WORK, and naturally a few things came up -- nothing really huge, but enough that the rhythm I'd been slowly developing (and coming to relish) was pretty much lost. And then Friday night/Saturday morning I was paged three times (11pm, 1am and 5.30am) -- mostly minor things, but enough that I was pretty much a wreck yesterday. I'm coming to dread the sad trombone.

Besides that, I've also been blogging about the LISA14 conference for USENIX, along with Katherine Daniels (@beerops) and Mark Lamourine (@markllama). They've got some excellent articles up; Mark wrote about LISA workshops, and Katherine described why she's going to LISA. Awesome stuff and worth your time.

I managed to brew last week for the first time since thrice-blessed February; it's a saison (yeast, wheat malt, acidulated malt) with a crapton of homegrown hops (roughly a pound). I'm looking forward to this one.

Going to San Francisco again week after next for $WORK. (Prospective busyness.)

Kids are back to school! Youngest is in grade 1 and oldest in grade 3. Wow.

-

Wha' happened?

Got SSL set up for both my web and email servers; created pull request for Duraconf in the process.

Traded in a crapton of telescope eyepieces for a couple nice upgrades: 2" 31mm Antares modified Erfle (74 deg FOV), and 1.25" 17mm Antares Speers-Waler (82 deg FOV). I also got a 2" diagonal and connector for the Meade; the Dob had a 2" focuser already. I kept my 12mm Vixen (50 deg FOV) and a random 7.5mm Plossl. All this was done at Vancouver Telescope, who are an incredibly awesome bunch of people.

Ordered flocking paper for the Meade (I think the focuser tube needs it) and the Peterson EZ focus kit (satisfied customers).

Went with the family to the PNE. Pics to come!

Visited brother and his wife in Kelowna with my parents.

First LISA blog post up at the USENIX blog. (Gotta write more about that too...)

-

Trying to make things easier

First day back at $WORK after the winter break yesterday, and some...interesting...things. Like finding out about the service that didn't come back after a power outage three weeks ago. Fuck. Add the check to Nagios, bring it up; when the light turns green, the trap is clean.

Or when I got a page about a service that I recognized as having, somehow, to do with a webapp we monitor, but no real recollection of what it does or why it's important. Go talk to my boss, find out he's restarted it and it'll be up in a minute, get the 25-word version of what it does, add him to the contact list for that service and add the info to documentation.

I start to think about how to include a link to documentation in Nagios alerts, and a quick search turns up "Default monitoring alerts are awful", a blog post by Jeff Goldschrafe about just this. His approach looks damned cool, and I'm hoping he'll share how he does this. Inna meantime, there's the Nagios config options "notes", "notes_url" and "action_url", which I didn't know about. I'll start adding stuff to the Nagios config. (Which really makes me wish I had a way of generating Nagios config...sigh. Maybe NConf?)
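For what it's worth, here's a minimal sketch of what generating that config could look like, in Python. It just renders one service definition that carries the "notes", "notes_url" and "action_url" directives. The host name, check command, wiki URLs and the "generic-service" template are all made up for illustration; this is not how NConf or Jeff's setup works.

```python
# Hypothetical sketch: render a Nagios service definition that carries
# documentation links. Host, command, template and URLs are invented.

def render_service(host, description, check_command, notes, notes_url, action_url):
    """Return a Nagios 'define service' block as a string."""
    directives = [
        ("use", "generic-service"),  # assumes a template with this name exists
        ("host_name", host),
        ("service_description", description),
        ("check_command", check_command),
        ("notes", notes),            # free-text note shown in the web interface
        ("notes_url", notes_url),    # link to documentation for this service
        ("action_url", action_url),  # link to something you can act on, e.g. a runbook
    ]
    # Pad directive names so the values line up in a column.
    width = max(len(name) for name, _ in directives)
    lines = ["define service {"]
    for name, value in directives:
        lines.append(f"    {name.ljust(width)}  {value}")
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_service(
        host="web01",
        description="HTTP",
        check_command="check_http",
        notes="Front-end web server; talk to the boss before restarting",
        notes_url="https://wiki.example.org/web01",
        action_url="https://wiki.example.org/runbooks/http",
    ))
```

Feed it a list of services from a CSV or YAML file and you'd have a (very) poor man's config generator.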

But also on Jeff's blog I found a post about Kaboli, which lets you interact with Nagios/Icinga through email. That's cool. Repo here.

Planning. I want to do something better with planning. I've got RT to catch problems as they emerge, and track them to completion. Combined with orgmode, it's pretty good at giving me a handy reference for what I'm working on (RT #666) and having the whole history available. What it's not good at is big-picture planning...everything is just a big list of stuff to do, not sorted by priority or labelled by project, and it's a big intimidating mess. I heard about Kanban when I was at LISA this year, and I want to give it a try...not sure if it's exactly right, but it seems close.

And then I came across Behaviour-driven infrastructure through Cucumber, a blog post from Lindsay Holmwood. Which is damn cool, and about which I'll write more another time. Which led to the Github repo for a cucumber/nagios plugin, and reading more about Cucumber, and behaviour-driven development versus test-driven development (hint: they're almost exactly the same thing).

My god, it's full of stars.

-

And then there are the resolutions

I'm still digesting all the stuff that came out of LISA this year. But there are a number of things I want to try out:

I learned a little bit about agile development, mainly from Geoff Halprin's training material and keynote, and it seemed interesting. One of the things that resonated with me was the idea of only having a small number of work stages for stuff: in the queue, next, working, and done. (Going without the info here, so quite possibly wrong.) I like that: work on stuff in two-week chunks, commit to that and get it done. That seems much more manageable than having stuff in the queue with no real idea of a schedule. And a two-week chunk is at least a good place to start: interruptions aren't about to go away any time soon, and I can adjust this as necessary.

A corollary is that it's probably not best to plan more than two such things in a month. I'm thinking about things like switching from Nagios to Icinga, setting up Ganeti, and such: more than I can do in an hour, less than a semester's work.

I really want to work on eliminating pain points this year. Icinga's one; Nagios' web interface is painful. (I'd also like to look at Sensu.) I want to make backups better. I want to add proper testing for Cfengine with Vagrant and Git, so I can go on more than a wing and a prayer when pushing changes.

I also need to work more closely with the faculty in my department. Part of that is committing to more manageable work, and part of that is just following through more. Part of it, though, is working with people that intimidate me, and letting them know what I can do for them.

I need to manage my time better, and I think a big part of that is interruptions. I've just been told I'm getting an office, which is a mixed blessing. There's a certain amount of flux in the office, and I've been making friends with the people around me lately. I'll miss them/that, but I think the ability to retreat and work on something is going to be valuable.

Another part of managing time is, I think/hope, a better routine. Like: one hour every day for long-term project work. (The office makes this easier to imagine.) Set times for the things I want to get done at home (where my free time comes in one-hour chunks). Deciding if I want to work on transit (I can take my laptop home with me, and it's a 90 minute commute), and how (fun projects? stuff I can't get done at work? blue-sky stuff?). If, because a. my eyes will bug out if I stare at a screen all day and b. I firmly intend to keep a limit on my work time. So it'd probably be a couple days a week, to allow time for all the podcasts and books I want to inhale.

Microboxing for productivity. Interesting stuff.

Kanban. Related to Agile, but I forgot about it. Pomodoro + Emacs + Orgmode, too.

Probably more as I think of it...but right now it's time to sleep. 5.30am comes awful early after 11 days off...

-

LISA12 Miscellany

A collection of stuff that didn't fit anywhere else:

St Vidicon of Cathode. Only slightly spoiled by the disclaimer "Saint Vidicon and his story are the intellectual property of Christopher Stasheff."

A Vagrant box for OmniOS, the OpenSolaris distro I heard about at LISA.

A picture of Matt Simmons. When I took this, he was committing some PHP code and mumbling something like "All I gotta do is enable globals and everything'll be fine..."

I finished the scavenger hunt and won the USENIX Dart of Truth:

-

Theologians

Where I'm going, you cannot come... "Theologians", Wilco

At 2.45am, I woke up because a) my phone was buzzing with a page from work, and b) the room was shaking. I was quite bagged, since I'd been up 'til 1 finishing yesterday's blog entry, and all I could think was "Huh...earthquake. How did Nagios know about this?" Since the building didn't seem to be falling, I went back to sleep. In the morning, I found out it was a magnitude 6.2 earthquake.

I was going to go to the presentation by the CBC on "What your CDN won't tell you" (initially read as "What your Canadian won't tell you": "Goddammit, it's pronounced BOOT") but changed my mind at the last minute and went to the Cf3 "Guru is in" session with Diego Zamboni. (But not before accidentally going to the Cf3 tutorial room; I made an Indiana Jones-like escape as Mark Burgess was closing the door.) I'm glad I went; I got to ask what people are doing for testing, and got some good hints.

Vagrant's good for testing (and also awesome in general). I'm trying to get a good routine set up for this, but I have not started using the Cf3 provider for Vagrant...because of crack? Not sure.

You might want to use different directories in your revision control; that makes it easy to designate dev, testing, and production machines (don't have to worry about getting different branches; just point them at the directories in your repo).

Make sure you can promote different branches in an automated way (merging branches, whatever). It's easy to screw this up, and it's worth taking the time to make it very, very easy to do it right.

If you've got a bundle meant to fix a problem, deliberately break a machine to make sure it actually does fix the problem.

Consider using git + gerrit + jenkins to test and review code.

The Cf3 sketch tool still looks neat. The Enterprise version looked cool, too; it was the first time I'd seen it demonstrated, and I was intrigued.

At the break I got drugs^Wcold medication from Jennifer. Then I sang to Matt:

(and the sailors say) MAAAA-AAAT you're a FIIINNE girl what a GOOOD WAAAF you would be but my life, my love and my LAY-ee-daaaay is the sea (DOOOO doo doo DOO DOO doot doooooooo)

I believe Ben has video; I'll see if it shows up.

BTW, Matt made me sing "Brandy" to him when I took this picture:

I discussed Yo Dawg Compliance with Ben ("Yo Dawg, I put an X in your Y so you could X when you Y"; == self-reference), and we decided to race each other to @YoDawgCompliance on Twitter. (Haha, I got @YoDawgCompliance2K. Suck it!)

(Incidentally, looking for a fully-YoDawg compliant ITIL implementation? Leverage @YoDawgCompliance2K thought leadership TODAY!)

Next up was the talk on the Greenfield HPC by @arksecond. I didn't know the term, and earlier in the week I'd pestered him for an explanation. Explanation follows: Greenfield is a term from the construction industry, and denotes a site devoid of any existing infrastructure, buildings, etc where one might do anything; Brownfield means a site where there are existing buildings, etc and you have to take those into account. Explanation ends. Back to the talk. Which was interesting.

They're budgeting 25 kW/rack, twice what we do. For cooling they use spot cooling, but they also were able to quickly prototype aisle containment with duct tape and cardboard. I laughed, but that's awesome: quick and easy, and it lets you play around and get it right. (The cardboard was replaced with plexiglass.)

Lunch was with Matt and Ken from FOO National Labs, then Sysad1138 and Scott. Regression was done, fun was had and phones were stolen.

The plenary! Geoff Halprin spoke about how DevOps has been done for a long time, isn't new and doesn't fix everything. Q from the audience: I work at MIT, and we turn out PhDs, not code; what of this applies to me? A: In one sense, not much; this is not as relevant to HPC, edu, etc; not everything looks like enterprise setups. But look at the techniques, underlying philosophy, etc and see what can be taken.

That's my summary, and the emphasis is prob. something he'd disagree with. But it's Friday as I write this and I am tired as I sit in the airport, bone tired and I want to be home. There are other summaries out there, but this one is mine.

-

Flair

Silly simple lies They made a human being out of you... "Flair", Josh Rouse

Thursday I gave my Lightning Talk. I prepared for it by writing it out, then rehearsing a couple times in my room to get it down to five minutes. I think it helped, since I got in about two seconds under the wire. I think I did okay; I'll post it separately. Pic c/o Bob the Viking:

Some other interesting talks:

@perlstalker on his experience with Ceph (he's happy);

@chrisstpierre on what XML is good for (it's code with a built-in validator; don't use it for setting syslog levels);

the guy who wanted to use retired aircraft carriers as floating data centres;

Dustin on MozPool (think cloud for Panda Boards);

Stew (@digitalcrow) on Machination, his homegrown hierarchical config management tool (users can set their preferences; if needed for the rest of their group, it can be promoted up the hierarchy as needed);

Derek Balling on megacity.org/timeline (keep your fingers crossed!);

a Google dev on his experience bringing down GMail.

Afterward I went to the vendor booths again, and tried the RackSpace challenge: here's a VM and its root password; it needs to do X, Y and Z. GO. I was told my time wasn't bad (8.5 mins; wasn't actually too hard), and I may actually win something. Had lunch with John again and discussed academia, fads in theoretical computer science and the like.

The afternoon talk on OmniOS was interesting; it's an Illumos version/distro with a rigorous update schedule. The presenter's company uses it in a LOT of machines, and their customers expect THEM to fix any problems/security problems...not say "Yeah, the vendor's patch is coming in a couple weeks." Stripped down; they only include about 110 packages (JEOS: "Just Enough Operating System") in the default install. "Holy wars" slide: they use IPS ("because ALL package managers suck") and vi (holler from audience: "Which one?"). They wrote their own installer: "If you've worked with OpenSolaris before, you know that it's actually pretty easy getting it to work versus fucking getting it on the disk in the first place."

At the break I met with Nick Anderson (@cmdln_) and Diego Zamboni (@zzamboni, author of "Learning Cfengine 3"). Very cool to meet them both, particularly as they did not knee me in the groin for my impertinence in criticising Cf3 syntax. Very, very nice and generous folk.

The next talk, "NSA on the Cheap", was one I'd already heard from the USENIX conference in the summer (downloaded the MP3), so I ended up talking to Chris Allison. I met him in Baltimore on the last day, and it turns out he's Matt's coworker (and both work for David Blank-Edelman). And when he found out that Victor was there (we'd all gone out on our last night in Baltimore) he came along to meet him. We all met up, along with Victor's wife Jennifer, and caught up even more. (Sorry, I'm writing this on Friday; quality of writing taking a nosedive.)

And so but Victor, Jennifer and I went out to Banker's Hill, a restaurant close to the hotel. Very nice chipotle bacon meatloaf, some excellent beer, and great conversation and company. Retired back to the hotel and we both attended the .EDU BoF. Cool story: someone who's unable to put a firewall on his network (he's in a department, not central IT, so not an option for him) woke up one day to find his printer not only hacked, but the firmware running a proxy of PubMed to China ("Why is the data light blinking so much?"). Not only that, but he couldn't upgrade the firmware because the firmware reloading code had been overwritten.

Q: How do you know you're dealing with a Scary Viking Sysadmin?

A: Service monitoring is done via two ravens named Huginn and Muninn.

-

The White Trash Period Of My Life

Careful with words -- they are so meaningful Yet they scatter like the booze from our breath... "The White Trash Period Of My Life", Josh Rouse

I woke up at a reasonable time and went down to the lobby for free wireless; finished up yesterday's entry (2400 words!), posted and ate breakfast with Andy, Alf ("I went back to the Instagram hat store yesterday and bought the fedora. But now I want to accessorize it") and...Bob in full Viking drag.

Andy: "Now you...you look like a major in the Norwegian army."

Off to the Powershell tutorial. I've been telling people since that I like two things from Microsoft: the Natural Keyboard, and now Powershell. There are some very, very nice features in there:

common args/functions for each command, provided by the PS library

directory-like listings for lots of things (though apparently manipulating the registry through PS is sub-optimal); feels Unix/Plan 9-like

$error contains all the errors in your interactive cycle

"programming with hand grenades": because just 'bout everything in PS is an object, you can pass that along through a pipe and the receiving command explodes it and tries to do the right thing.

My notes are kind of scattered: I was trying to install version 3 (hey MS: please make this easier), and then I got distracted by something I had to do for work. But I also got to talk to Steve Murawski, the instructor, during the afternoon break, as we were both on the LOPSA booth. I think MS managed to derive a lot of advantage from being the last to show up at the party.

Interestingly, during the course I saw on Twitter that Samba 4 has finally been released. My jaw dropped. It looks like there are still some missing bits, but it can be an AD now. [Keanu voice] Whoah.

During the break I helped staff the LOPSA booth and hung out with a sysadmin from NASA; one of her users is a scientist who gets data from the ChemCam (I think) on Curiosity. WAH.

The afternoon's course was on Ganeti, given by Tom Limoncelli and Guido Trotter. THAT is my project for next year: migrating my VMs, currently on one host, to Ganeti. It seems very, very cool. And on top of that, you can test it out in VirtualBox. I won't put in all my notes, since I'm writing this in a hurry (I always fall behind as the week goes on) and a lot of it is avail in the documentation. But:

You avoid needing a SAN by letting it do DRBD on different pairs of nodes. Need to migrate a machine? Ganeti will pass it over to the other pair.

If you've got a pair of machines (which is about my scale), you've just gained failover of your VMs. If you've got more machines, you can declare a machine down (memory starts crapping out, PS failing, etc) and migrate the machines over to their alternate. When the machine's back up, Ganeti will do the necessary to get the machine back in the cluster (sync DRBDs, etc).

You can import already-existing VMs (Tom: "Thank God for summer interns.")

There's a master, but there are master candidates ready to take over if requested or if the master becomes unavailable.

There's a web manager to let users self-provision. There's also Synnefo, an AWS-like web FE that's commercialized as Okeanos.io (free trial: 3-hour lifetime VMs)

I talked with Scott afterward, and learned something I didn't know: NFS over GigE works fine for VM images. Turn on hard mounts (you want to know when something goes wrong), use TCP, use big block sizes, but it works just fine. This changes everything.

In the evening the bar was full and the hotel restaurant was definitely outside my per diem, so I took a cab downtown to the Tipsy Crow. Good food, nice beer, and great people watching. (Top tip for Canadians: here, the hipsters wear moustaches even when it's not Movember. Prepare now and get ahead of the curve.) Then back to the hotel for the BoFs. I missed Matt's on small infrastructure (damn) but did make the amateur astronomy BoF, which was quite cool. I ran into John Hewson, my roommate from the Baltimore LISA, and found out he's presenting tomorrow; I'll be there for that.

Q: How do you know you're with a Scary Viking Sysadmin?

A: Prefaces new cool thing he's about to show you with "So I learned about this at the last sysadmin Althing...."

-

Handshake Drugs

And if I ever was myself, I wasn't that night... "Handshake Drugs", Wilco

Wednesday was opening day: the stats (1000+ attendees) and the awards (the Powershell devs got one for "bringing the power of automated system administration to Windows, where it was previously largely unsupported"). Then the keynote from Vint Cerf, co-designer of TCP and yeah. He went over a lot of things, but made it clear he was asking questions, not proposing answers. Many cool quotes, including: "TCP/IP runs over everything, including you if you're not paying attention." Discussed the recent ITU talks a lot, and what exactly he's worried about there. Grab the audio/watch the video.

Next talk was about a giant scan of the entire Internet (/0) for SIP servers. Partway through my phone rang and I had to take it, but by the time I got out to the hall it'd stopped and it turned out to be a wrong number anyway. Grr.

IPv6 numbering strategies was next. "How many hosts can you fit in a /48? ALL OF THEM." Align your netblocks by nibble boundaries (hex numbers); it makes visual recognition of demarcation so much easier. Don't worry about packing addresses, because there's lots of room and why complicate things? You don't want to be doing bitwise math in the middle of the night.
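To see why the nibble thing matters, here's a small sketch using Python's standard ipaddress module. The prefix is from the 2001:db8::/32 documentation range, and the helper name is my own invention, not anything from the talk. Splitting a /48 into /52s (one nibble further along) gives subnets that differ by exactly one hex digit, so you can read the boundary right off the address.

```python
import ipaddress


def nibble_aligned_subnets(block, new_prefix):
    """Split an IPv6 block into subnets whose prefix length sits on a
    nibble (4-bit, i.e. one hex digit) boundary.

    Hypothetical helper for illustration; returns an iterator of networks.
    """
    net = ipaddress.ip_network(block)
    if new_prefix % 4 != 0:
        raise ValueError("prefix length must be a multiple of 4 to sit on a nibble boundary")
    if new_prefix < net.prefixlen:
        raise ValueError("new prefix must not be shorter than the original block's prefix")
    return net.subnets(new_prefix=new_prefix)


if __name__ == "__main__":
    # Sixteen /52s out of one /48: only the first hex digit of the
    # fourth group changes from one subnet to the next.
    for subnet in nibble_aligned_subnets("2001:db8:abcd::/48", 52):
        print(subnet)

    # Room to spare: a /48 holds 2**16 /64 networks, each with 2**64 addresses.
    print(2 ** (64 - 48), "possible /64s in a /48")
```

Ask for a /50 instead and you'd get boundaries that fall in the middle of a hex digit, which is exactly the bitwise math you don't want at 3am; the sketch simply refuses.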

Lunch, and the vendor tent. But first an eye-wateringly expensive burrito -- tasty, but $9. It was NOT a $9-sized burrito. I talked to the CloudStack folks and the Ceph folks, and got cool stuff from each. Both look very cool, and I'm going to have to look into them more when I get home. Boxer shorts from the Zenoss folks ("We figured everyone had enough t-shirts").

I got to buttonhole Mark Burgess, tell him how much I'm grateful for what he's done but OMG would he please do something about the mess of brackets. Like the Wordpress sketch:

    commands:
      !wordpress_tarball_is_present::
        "/usr/bin/wget -q -O $($(params)[_tarfile]) $($(params)[_downloadurl])"
          comment => "Downloading latest version of WordPress.";

His response, as previously, was "Don't do that, then." To be fair, I didn't have this example and was trying to describe it verbally ("You know, dollar bracket dollar bracket variable square bracket...C'mon, I tweeted about it in January!"). And he agreed yes, it's a problem, but it's in the language now, and indirection is a problem no matter what. All of which is true, and I realize it's easy for me to propose work for other people without coming up with patches. And I let him know that this was a minor nit, that I really was grateful for Cf3. So there.

I got to ask Dru Lavigne about FreeBSD's support for ZFS (same as Illumos) and her opinion of DragonflyBSD (neat, thinks of it as meant for big data rather than desktops, "but maybe I'm just old and crotchety").

I talked with a PhD student who was there to present a paper. He said it was an accident he'd done this; he's not a sysadmin, and though his nominal field is CS, he's much more interested in improving the teaching of undergraduate students. ("The joke is that primary/secondary school teachers know all about teaching and not so much about the subject matter, and at university it's the other way around.") In CompSci it's all about the conferences -- that's where/how you present new work, not journals (Science, Nature) like the natural sciences. What's more, the prestigious conferences are the theoretical ones run by the ACM and the IEEE, not a practical/professional one like LISA. "My colleagues think I'm slumming."

Off to the talks! First one was a practice and experience report on the config and management of a crapton (700) of iPads for students at an Australian university. The iPads belonged to the students -- so whatever profile was set up had to be removable when the course was over, and locking down permanently was not an option.

No suitable tools for them -- so they wrote their own. ("That's the way it is in education.") Started with Django, which the presenter said should be part of any sysadmin's toolset; easy to use, management interface for free. They configured one iPad, copied the configuration off, de-specified it with some judicious search and replace, and then prepared it for templating in Django. To install it on the iPad, the students would connect to an open wireless network, auth to the web app (which was connected to the university LDAP), and the iPad would prompt them to install the profile.

The open network was chosen because the secure network would require a password....which the iPad didn't have yet. And the settings file required an open password in it for the secure wireless to work. The reviewers commented on this a lot, but it was a conscious decision: setting up the iPad was one of ten tasks done on their second day, and a relatively technical one. And these were foreign students, so language comprehension was a problem. In the end, they felt it was a reasonable risk.

John Hewson was up next, talking about ConfSolve, his declarative configuration language connected to/written with a constraint solver. ("Just cross this red wire with this blue wire...") John was my roommate at the Baltimore LISA, and it was neat to see what he's been working on. Basically, you can say things like "I want this VM to have 500 GB of disk" and ConfSolve will be all like, "Fuck you, you only have 200 GB of storage left". You can also express hard limits and soft preferences ("Maximize memory use. It'd be great if you could minimise disk space as well, but just do your best"). This lets you do things like cloudbursting: "Please keep my VMs here unless things start to suck, in which case move my web, MySQL and DNS to AWS and leave behind my SMTP/IMAP."
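To make the hard-limit/soft-preference idea concrete, here's a toy brute-force sketch in Python. To be clear, this is NOT ConfSolve's language or its solver; the function, VM names and host names are all hypothetical, and a real constraint solver is far smarter than trying every combination. The hard constraint is that a host's disk can't be oversubscribed; the soft preference is to use as few hosts as possible.

```python
from itertools import product


def place_vms(vms, hosts):
    """Assign each VM to a host by exhaustive search (toy example).

    vms:   {vm_name: disk_gb_needed}
    hosts: {host_name: free_disk_gb}

    Hard constraint: total disk placed on a host must not exceed its free space.
    Soft preference: among valid placements, use the fewest hosts.
    Returns {vm_name: host_name}, or None if no placement satisfies the hard limit.
    """
    vm_names = sorted(vms)
    host_names = sorted(hosts)
    best, best_cost = None, None
    for choice in product(host_names, repeat=len(vm_names)):
        used = {h: 0 for h in host_names}
        for vm, host in zip(vm_names, choice):
            used[host] += vms[vm]
        # Hard limit: reject any placement that oversubscribes a host.
        if any(used[h] > hosts[h] for h in host_names):
            continue
        # Soft preference: fewer occupied hosts is better.
        cost = sum(1 for h in host_names if used[h] > 0)
        if best_cost is None or cost < best_cost:
            best, best_cost = dict(zip(vm_names, choice)), cost
    return best


if __name__ == "__main__":
    # "I want this VM to have 500 GB" vs. "you only have 200 GB left"
    print(place_vms({"big": 500}, {"node1": 200}))
    # Both VMs fit on one node, so the soft preference packs them together.
    print(place_vms({"web": 100, "db": 80}, {"node1": 200, "node2": 200}))
```

The point is the shape of the problem: you state what must hold and what you'd like, and something else works out the assignment.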

After his presentation I went off to grab lunch, then back to the LISA game show. It was surprisingly fun and funny. And then, Matt and I went to the San Diego Maritime Museum, which was incredibly awesome. We walked through The Star of India, a huge three-masted cargo ship that still goes out and sails. There were actors there doing Living History (you could hear the caps) with kids, and displays/dioramas to look at. And then we met one of the actors who told us about the ship, the friggin' ENORMOUS sails that make it go (no motor), and about being the Master at Arms in the movie "Master and Commander". Which was our cue to head over to the HMS Surprise, used in the filming thereof. It's a replica, but accurate and really, really neat to see. Not nearly as big as the Star of India, and so many ropes...so very, very many ropes. And after that we went to a Soviet (!) Foxtrot-class submarine, where we had to climb through four circular hatches, each about a metre in diameter. You know how they say life in a submarine is claustrophobic? Yeah, they're not kidding. Amazing, and I can't recommend it enough.

We walked back to the hotel, got some food and beer, and headed off to the LOPSA annual meeting. I did not win a prize. Talked with Peter from the University of Alberta about the lightning talk I promised to do the next day about reproducible science. And thence to bed.

Q: How do you know you're with a Scary Viking Sysadmin?

A: When describing multiple roles at the office, says "My other hat is made of handforged steel."

-

Christmas With Jesus

And my conscience has it stripped down to science Why does everything displease me? Still, I'm trying... "Christmas with Jesus", Josh Rouse

At 3am my phone went off with a page from $WORK. It was benign, but do you think I could get back to sleep? Could I bollocks. I gave up at 5am and came down to the hotel lobby (where the wireless does NOT cost $11/day for 512 Kb/s, or $15 for 3Mb/s) to get some work done and email my family. The music volume was set to 11, and after I heard the covers of "Living Thing" (Beautiful South) and "Stop Me If You Think That You've Heard This One Before" (Mark Ronson; disco) I retreated back to my hotel room to sit on my balcony and watch the airplanes. The airport is right by both the hotel and the downtown, so when you're flying in you get this amazing view of the buildings OH CRAP RIGHT THERE; from my balcony I can hear them coming in but not see them. But I can see the ones that are, I guess, flying to Japan; they go straight up, slowly, and the contrail against the morning twilight looks like rockets ascending to space. Sigh.

Abluted (ablated? hm...) and then down to the conference lounge to stock up on muffins and have conversations. I talked to the guy giving the .EDU workshop ("What we've found is that we didn't need a bachelor's degree in LDAP and iptables"), and with someone else about kids these days ("We had a rich heritage of naming schemes. Do you think they're going to name their desktop after Lord of the Rings?" "Naw, it's all gonna be Twilight and Glee.")

Which brought up another story of network debugging. After an organizational merger, network problems persisted until someone figured out that each network had its own DNS servers that had inconsistent views. To make matters worse, one set was named Kirk and Picard, and the other was named Gandalf and Frodo. Our Hero knew then what to do, and in the post-mortem Root Cause Diagnosis, Executive Summary, wrote "Genre Mismatch." [rimshot]

(6.48 am and the sun is rising right this moment. The earth, she is a beautiful place.)

And but so on to the HPC workshop, which intimidated me. I felt unprepared. I felt too small, too newbieish to be there. And when the guy from fucking Oak Ridge got up and said sheepishly, "I'm probably running one of the smaller clusters here," I cringed. But I needn't have worried. For one, maybe 1/3rd of the people introduced themselves as having small clusters (smallest I heard was 10 nodes, 120 cores), or being newbies, or both. For two, the host/moderator/glorious leader was truly excellent, in the best possible Bill and Ted sense, and made time for everyone's questions. For three, the participants were also generous with time and knowledge, and whether I asked questions or just sat back and listened, I learned so much.

Participants: Oak Ridge, Los Alamos, a lot of universities, and a financial trading firm that does a lot of modelling and has some really interesting, regulatory-driven filesystem characteristics: nothing can be deleted for 7 years. So if someone's job blows up and it litters the filesystem with crap, you can't remove the files. Sure, they're only 10-100 MB each, but with a million jobs a day that adds up. You can archive...but if the SEC shows up asking for files, they need to have them within four hours.

The guy from Oak Ridge runs at least one of his clusters diskless: fewer moving parts to fail. Everything gets saved to Lustre. This became a requirement when, in an earlier cluster, a node failed and it had Very Important Data on a local scratch disk, and it took a long time to recover. The PI (==principal investigator, for those not from an .EDU; prof/faculty member/etc who leads a lab) said, "I want to be able to walk into your server room, fire a shotgun at a random node, and have it back within 20 minutes." So, diskless. (He's also lucky because he gets biweekly maintenance windows. Another admin announces his quarterly outages a year in advance.)

There were a lot of people who ran configuration management (Cf3, Puppet, etc) on their compute nodes, which surprised me. I've thought about doing that, but assumed I'd be stealing precious CPU cycles from the science. Overwhelming response: Meh, they'll never notice. OTOH, using more than one management tool is going to cause admin confusion or state flapping, and you don't want to do that.

One guy said (both about this and the question of what installer to use), "Why are you using anything but Rocks? It's federally funded, so you've already paid for it. It works and it gets you a working cluster quickly. You should use it unless you have a good reason not to." "I think I can address that..." (laughter) Answer: inconsistency with installations; not all RPMs get installed when you're doing 700 nodes at once, so he uses Rocks for a bare-ish install and Cf3 after that -- a lot like I do with Cobbler for servers. And FAI was mentioned too, which apparently has support for CentOS now.

One .EDU admin gloms all his lab's desktops into the cluster, and uses Condor to tie it all together. "If it's idle, it's part of the cluster." No head node, jobs can be submitted from anywhere, and the dev environment matches the run environment. There's a wide mix of hardware, so part of user education is a) getting people to specify minimal CPU and memory requirements and b) letting them know that the ideal job is 2 hours long. (Actually, there were a lot of people who talked about high-turnover jobs like that, which is different from what I expected; I always thought of HPC as letting your cluster go to town for 3 weeks on something. Perhaps that's a function of my lab's work, or having a smaller cluster.)

User education was something that came up over and over again: telling people how to efficiently use the cluster, how to tweak settings (and then vetting jobs with scripts).

I asked about how people learned about HPC; there's not nearly the wealth of resources that there are for programming, sysadmin, networking, etc. Answer: yep, it's pretty quiet out there. Mailing lists tend to be product-specific (though are pretty excellent), vendor training is always good if you can get it, but generally you need to look around a lot. ACM has started a SIG for HPC.

I asked about checkpointing, which was something I've been very fuzzy about. Here's the skinny:

Checkpointing is freezing the process so that you can resurrect it later. It protects against node failures (maybe with automatic moving of the process/job to another node if one goes down) and outages (maybe caused by maintenance windows.)

Checkpointing can be done at a few different layers:

- the app itself

- the scheduler (Condor can do this; Torque can't)

- the OS (BLCR for Linux, but see below)

- or just suspending a VM and moving it around; I was unclear how many people did this.
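A minimal sketch of the app-level approach, assuming a job that can be cut into numbered steps. The names `run_job` and `CHECKPOINT_FILE` are made up for illustration and the crash is simulated; a real app would save whatever state it needs, not just a counter.

```shell
#!/bin/sh
# App-level checkpointing sketch: the job records its own progress in a
# small state file and picks up from there after a restart.
# CHECKPOINT_FILE and run_job are hypothetical names, not from any scheduler.
CHECKPOINT_FILE="${CHECKPOINT_FILE:-./job.checkpoint}"

# run_job TOTAL [STOP_AFTER]
# Runs steps up to TOTAL. If STOP_AFTER is given, the run "dies" (status 1)
# after that many steps in this invocation, to simulate a node failure.
run_job() (
    total=$1
    stop_after=${2:-0}
    step=0
    # Resume from the last recorded step if a checkpoint exists.
    if [ -f "$CHECKPOINT_FILE" ]; then
        step=$(cat "$CHECKPOINT_FILE")
    fi
    done_now=0
    while [ "$step" -lt "$total" ]; do
        step=$((step + 1))    # the real work would happen here
        # Write to a temp file and rename, so a crash mid-write
        # never leaves a half-written checkpoint behind.
        echo "$step" > "$CHECKPOINT_FILE.tmp" &&
            mv "$CHECKPOINT_FILE.tmp" "$CHECKPOINT_FILE"
        done_now=$((done_now + 1))
        if [ "$stop_after" -gt 0 ] && [ "$done_now" -ge "$stop_after" ]; then
            exit 1            # simulated failure; only leaves this subshell
        fi
    done
    echo "finished at step $step"
)
```

Running `run_job 10 4` and then `run_job 10` does four steps, "dies", and then resumes at step five instead of starting over.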

- The easiest and best by far is for the app to do it. It knows its state intimately and is in the best position to do this. However, the app needs to support this. Not necessary to have it explicitly save the process (as in, kernel-resident memory image, registers, etc); if it can look at logs or something and say "Oh, I'm 3/4 done", then that's good too.

- The Condor scheduler supports this, *but* you have to do this by linking in its special libraries when you compile your program. And none of the big vendors do this (Matlab, Mathematica, etc).

- BLCR: "It's 90% working, but the 10% will kill you." Segfaults, restarts only work 2/3 of the time, etc. Open-source project from a federal lab and until very recently not funded -- so the response to "There's this bug..." was "Yeah, we're not funded. Can't do nothing for you." Funding has been obtained recently, so keep your fingers crossed.

One admin had problems with his nodes: random slowdowns, not caused by cstates or the other usual suspects. It's a BIOS problem of some sort and they're working it out with the vendor, but in the meantime the only way around it is to pull the affected node and let the power drain completely. This was pointed out by a user ("Hey, why is my job suddenly taking so long?") who was clever enough to write a dirt-simple 10 million iteration for-loop that very, very obviously took a lot longer on the affected node than the others.

At this point I asked if people were doing regular benchmarking on their clusters to pick up problems like this. Answer: no. They'll do benchmarking on their cluster when it's stood up so they have something to compare it to later, but users will unfailingly tell them if something's slow.

I asked about HPL; my impression when setting up the cluster was, yes, benchmark your own stuff, but benchmark HPL too 'cos that's what you do with a cluster. This brought up a host of problems for me, like compiling it and figuring out the best parameters for it.

Answers:

- Yes, HPL is a bear. Oak Ridge: "We've got someone for that and that's all he does." (Response: "That's your answer for everything at Oak Ridge.")

- Fiddle with the params P, Q and N, and leave the rest alone. You can predict the FLOPS you should get on your hardware, and if you get 90% or so within that you're fine.

- HPL is not that relevant for most people, and if you tune your cluster for linear algebra (which is what HPL does) you may get crappy performance on your real work.

- You can benchmark it if you want (and download Intel's binary if you do; FIXME: add link), but it's probably better and easier to stick to your own apps.

Random:

- There's a significant number of clusters that expose interactive sessions to users via qlogin; that had not occurred to me.

- Recommended tools:

  - ubmod: accounting graphs

  - Healthcheck scripts (Werewolf)

  - stress: cluster stress test tool

  - munin: to collect arbitrary info from a machine

  - collectl: good for ie millisecond resolution of traffic spikes

- "So if a box gets knocked over -- and this is just anecdotal -- my experience is that the user that logs back in first is the one who caused it."

- A lot of the discussion was prompted by questions like "Is anyone else doing X?" or "How many people here are doing Y?" Very helpful.

- If you have to return warranty-covered disks to the vendor but you really don't want the data to go, see if they'll accept the metal cover of the disk. You get to keep the spinning rust.

- A lot of talk about OOM-killing in the bad old days ("I can't tell you how many times it took out init."). One guy insisted it's a lot better now (3.x series).

- "The question of changing schedulers comes up in my group every six months."

- "What are you doing for log analysis?" "We log to /dev/null." (laughter) "No, really, we send syslog to /dev/null."

- Splunk is eye-wateringly expensive: 1.5 TB data/day =~ $1-2 million annual license.

- On how much disk space Oak Ridge has: "It's...I dunno, 12 or 13 PB? It's 33 tons of disks, that's what I remember."

- Cheap and cheerful NFS: OpenSolaris or FreeBSD running ZFS. For extra points, use an Aztec Zeus for a ZIL: a battery-backed 8GB DIMM that dumps to a compact flash card if the power goes out.

- Some people monitor not just for overutilization, but for underutilization: it's a chance for user education ("You're paying for my time and the hardware; let me help you get the best value for that"). For Oak Ridge, though, there's less pressure for that: scientists get billed no matter what.

- "We used to blame the network when there were problems. Now their app relies on SQL Server and we blame that."

- Sweeping for expired data is important. If it's scratch, then *treat* it as such: negotiate expiry dates and sweep regularly.

- Celebrity resemblances: Michael Moore and the guy from Dead Poet's Society/The Good Wife. (Those are two different sysadmins, btw.)

- Asked about my .TK file problem; no insight. Take it to the lists. (Don't think I've written about this, and I should.)

- On why one lab couldn't get Vendor X to supply DKMS kernel modules for their hardware: "We're three orders of magnitude away from their biggest customer. We have *no* influence."

- Another vote for SoftwareCarpentry.org as a way to get people up to speed on Linux.

- A lot of people encountered problems upgrading to Torque 4.x and rolled back to 2.5. "The source code is disgusting. Have you ever looked at it? There's 15 years of cruft in there. The devs acknowledged the problem and announced they were going to be taking steps to fix things. One step: they're migrating to C++. [Kif sigh]"

- "Has anyone here used Moab Web Services? It's as scary as it sounds. Tomcat...yeah, I'll stop there." "You've turned the web into RPC. Again."

- "We don't have regulatory issues, but we do have a physicist/geologist issue."

- 1/3 of the Top 500 use SLURM as a scheduler.

  Slurm's srun =~ Torque's pbsdsh; I have the impression it does not use MPI (well, okay, neither does Torque, but a lot of people use Torque + mpirun), but I really need to do more reading.

- lmod (FIXME: add link) is an Environment Modules-compatible (works with old module files) replacement that fixes some problems with old EM, actively developed, written in lua.

- People have had lots of bad experiences with external Fermi GPU boxes from Dell, particularly when attached to non-Dell equipment.

- Puppet has git hooks that let you pull out a particular branch on a node.

And finally:

Q: How do you know you're with a Scary Viking Sysadmin?

A: They ask for Thor's Skullsplitter Mead at the Google BoF.

-

Hotel Arizona

Hotel in Arizona made us all wanna feel like stars... "Hotel Arizona", Wilco

Sunday morning I was down in the lobby at 7.15am, drinking coffee purchased with my $5 gift certificate from the hotel for passing up housekeeping ("Sheraton Hotels Green Initiative"). I registered for the conference, came back to my hotel room to write some more, then back downstairs to wait for my tutorial on Amazon Web Services from Bill LeFebvre (former LISA chair and author of top(1)) and Marc Chianti. It was pretty damned awesome: an all-day course that introduced us to AWS and the many, many services they offer. For reasons that vary from budgeting to legal we're unlikely to move anything to AWS at $WORK, but it was very, very enlightening to learn more about it. Like:

Amazon lights up four new racks a day, just keeping up with increased demand.

Their RDS service (DB inna box) will set up replication automagically AND apply patches during configurable regular downtime. WAH.

vmstat(1) will, for a VM, show CPU cycles stolen by/for other VMs in the ST column

Amazon will not really guarantee CPU specs, which makes sense (you're one guest on a host of 20 VMs, many hardware generations, etc). One customer they know will spin up a new instance and immediately benchmark it to see if performance is acceptable; if not, they'll destroy it and try again.

Netflix, one of AWS' biggest customers, does not use EBS (persistent) storage for its instances. If there's an EBS problem -- and this probably happens a few times a year -- they keep trucking.

It's quite hard to "burst into the cloud" -- to use your own data centre most of the time, then move stuff to AWS at Xmas, when you're Slashdotted, etc. The problem is: where's your load balancer? And how do you make that available no matter what?

One question I asked: How would you scale up an email service? 'Cos for that, you don't only need CPU power, but (say) expanded disk space, and that shared across instances. A: Either do something like GlusterFS on instances to share FS, or just stick everything in RDS (AWS' MySQL service) and let them take care of it.
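Back to that steal column for a second: vmstat gets it from the aggregate `cpu` line in `/proc/stat`, where steal is the eighth counter. Here's a rough sketch of working out the percentage by hand from two samples; `steal_pct` is a hypothetical helper name, and the approach assumes a Linux guest with a kernel recent enough to report steal.

```shell
#!/bin/sh
# steal_pct BEFORE AFTER
# Each argument is an aggregate "cpu ..." line from the top of /proc/stat.
# Prints steal time as a percentage of all CPU time between the two samples.
steal_pct() {
    printf '%s\n%s\n' "$1" "$2" | awk '
        {
            # Fields 2..9 are user, nice, system, idle, iowait, irq,
            # softirq, steal. Guest time is already counted inside user.
            total = 0
            for (i = 2; i <= 9; i++) total += $i
            if (NR == 1) { t0 = total; s0 = $9 }
            else         { t1 = total; s1 = $9 }
        }
        END {
            dt = t1 - t0
            if (dt <= 0) { print "0.0"; exit }
            printf "%.1f\n", 100 * (s1 - s0) / dt
        }'
}

# Example on a live box:
#   before=$(head -n 1 /proc/stat); sleep 5; after=$(head -n 1 /proc/stat)
#   steal_pct "$before" "$after"
```

Something like this is probably the cheapest first check in that "benchmark it and throw it back" routine, though a real benchmark of your own workload tells you more.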

The instructors know their stuff and taught it well. If you have the chance, I highly recommend it.

Lunch/Breaks:

Met someone from Mozilla who told me that they'd just decommissioned the last of their community mirrors in favour of CDNs -- less downtime. They're using AWS for a new set of sites they need in Brazil, rather than opening up a new data centre or some such.

Met someone from a flash sale site: they do sales every day at noon, when they'll get a million visitors in an hour, and then it's quiet for the next 23 hours. They don't use AWS -- they've got enough capacity in their data centre for this, and they recently dropped another cloud provider (not AWS) because they couldn't get the raw/root/hypervisor-level performance metrics they wanted.

Saw members of (I think) this show choir wearing spangly skirts and carrying two duffel bags over each shoulder, getting ready to head into one of the ballrooms for a performance at a charity lunch.

Met a sysadmin from a US government/educational lab, talking about fun new legal constraints: to keep running the lab, the gov't required not a university but a LLC. For SLAC, that required a new entity called SLAC National Lab, because Stanford was already trademarked and you can't delegate a trademark like you can DNS zones. And, it turns out, we're not the only .edu getting fuck-off prices from Oracle. No surprise, but still reassuring.

I saw Matt get tapped on the shoulder by one of the LISA organizers and taken aside. When he came back to the table he was wearing a rubber Nixon mask and carrying a large clanking duffel bag. I asked him what was happening and he said to shut up. I cried, and he slapped me, then told me he loved me, that it was just one last job and it would make everything right. (In the spirit of logrolling, here he is scoping out bank guards:

Where does the close bracket go?)

After that, I ran into my roommate from the Baltimore LISA in 2009 (check my tshirt...yep, 2009). Very good to see him. Then someone pointed out that I could get free toothpaste at the concierge desk, and I was all like, free toothpaste?

And then who should come in but Andy Seely, Tampa Bay homeboy and LISA Organizing Committee member. We went out for beer and supper at Karl Strauss (tl;dr: AWESOME stout). Discussed fatherhood, the ageing process, free-range parenting in a hangar full of B-52s, and just how beer is made. He got the hang of it eventually:

I bought beer for my wife, he took a picture of me to show his wife, and he shared his toothpaste by putting it on a microbrewery coaster so I didn't have to pay $7 for a tube at the hotel store, 'cos the concierge was out of toothpaste. It's not a euphemism.

Q: How do you know you're with a Scary Viking Sysadmin?

A: They insist on hard drive destruction via longboat funeral pyre.

-

(nothinsevergonnagetinmyway) Again

Wasted days, wasted nights Try to downplay being uptight... -- "(nothinsevergonnastandinmyway) Again", Wilco

Saturday I headed out the door at 5.30am -- just like I was going into work early. I'd been up late the night before finishing up "Zone One" by Colson Whitehead, which ZOMG is incredible and you should read, but I did not want to read while alone and feeling discombobulated in a hotel room far from home. Cab to the airport, and I was surprised to find I didn't even have to opt out; the L3 scanners were only being used irregularly. I noticed the hospital curtains set up for the private screening area; it looked a bit like God's own shower curtain.

The customs guard asked me where I was going, and whether I liked my job. "That's important, you know?" Young, a shaved head and a friendly manner. Confidential look left, right, then back at me. "My last job? I knew when it was time to leave that one. You have a good trip."

The gate for the airline I took was way out on a side wing of the airport, which I can only assume meant that airline lost a coin toss or something. The flight to Seattle was quick and low, so it wasn't until the flight to San Diego that a) we climbed up to our cruising altitude of $(echo "39000/3.3" | bc) 11818 meters and b) my ears started to hurt. I've got a cold and thought that my aggressive taking of cold medication would help, but no. The first seatmate had a shaved head, a Howie Mandel soul patch, a Toki watch and read "Road and Track" magazine, staring at the ads for mag wheels; the other seatmate announced that he was in the Navy, going to his last command, and was going to use the seat tray as a headrest as soon as they got to cruising. "I was up late last night, you know?" I ate my Ranch Corn Nuggets (seriously).

Once at the hotel, I ran into Bob the Norwegian, who berated me for being surprised that he was there. "I've TOLD you this over and over again!" Not only that, but he was there with three fellow Norwegian sysadmins, including his minion. I immediately started composing Scary Viking Sysadmin questions in my head; you may begin to look forward to them.

We went out to the Gaslamp district of San Diego, which reminds me a lot of Gastown in Vancouver; very familiar feel, and a similar arc to its history. Alf the Norwegian wanted a hat for cosplay, so we hit two -- TWO -- hat stores. The second resembled nothing so much as a souvenir shop in a tourist town, but the first was staffed by two hipsters looking like they'd stepped straight out of Instagram:

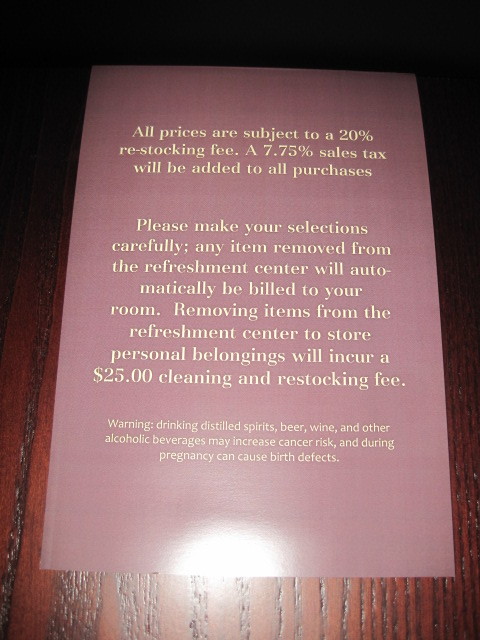

They sold $160 Panama hats. I very carefully stayed away from the merchandise. Oh -- and this is unrelated -- from the minibar in my hotel room:

We had dinner at a restaurant whose name I forget; stylish kind of place, with ten staff members (four of whom announced, separately, that they would be our server for the night). They seemed disappointed when I ordered a Wipeout IPA ("Yeah, we're really known more for our Sangria"), but Bob made up for it by ordering a Hawaiian Hoo-Hoo:

We watched the bar crawlers getting out of cabs dressed in Sexy Santa costumes ("The 12 Bars of Xmas Pub Crawl 2012") and discussed Agile Programming (which phrase, when embedded in a long string of Norwegian, sounds a lot like "Anger Management".)

Q: How do you know you're with a Scary Viking Sysadmin?

A: They explain the difference between a fjord and a fjell in terms of IPv6 connectivity.

There was also this truck in the streets, showing the good folks of San Diego just what they were missing by not being at home watching Fox Sports:

We headed back to the hotel, and Bob and I waited for Matt to show up. Eventually he did, with Ben Cotton in tow (never met him before -- nice guy, gives Matt as much crap as I do -> GOOD) and Matt regaled us with tales of his hotel room:

Matt: So -- I don't wanna sound special or anything -- but is your room on the 7th floor overlooking the pool and the marina with a great big king-sized bed? 'Cos mine is.

Me: Go on.

Matt: I asked the guy at the desk when I was checking in if I could get a king-size bed instead of a double --

Me: "Hi, I'm Matt Simmons. You may know me from Standalone Hyphen Sysadmin Dot Com?"

Ben: "I'm kind of a big deal on the Internet."

Matt: -- and he says sure, but we're gonna charge you a lot more if you trash it.

Not Matt's balcony:

(UPDATE: Matt read this and said "Actually, I'm on the 9th floor? Not the 7th." saintaardvarkthecarpeted.com regrets the error.)

I tweeted from the bar using my laptop ("It's an old AOLPhone prototype"). It was all good.

-

Tampa Bay Breakfasts

My friend Andy, who blogs at Tampa Bay Breakfasts, got an article written about him here. Like his blog, it's good reading. You should read both.

He's also a sysadmin who's on the LISA organizing committee this year, and I'm going to be seeing him in a few days when I head down to San Diego. The weather is looking shockingly good for this Rain City inhabitant. I'm looking forward to it. Now I just have to pick out my theme band for this year's conference....I'm thinking maybe Josh Rouse.

-

Things out from under my desk FTMFW

Yesterday I finally moved the $WORK mail server (well, services) from a workstation under my desk to a proper VM and all. Mailman, Postfix, Dovecot -- all went. Not only that, but I've got them running under SELinux no less. Woot!

Next step was to update all the documentation, or at least most of it, that referred to the old location. In the process I came across something I'd written in advance of the last time I went to LISA: "My workstation is not important. It does no services. I mention this so that no one will panic if it goes down."

Whoops: not true! While migrating to Cfengine 3, I'd set up the Cf3 master server on my workstation. After all, it was only for testing, right? Heh. We all know how that goes. So I finally bit the bullet and moved it over to a now-even-more-important VM (no, not the mail server) and put the policy files under /masterfiles so that bootstrapping works. Now we're back to my workstation only holding my stuff. Hurrah!

And did I mention that I'm going to LISA? True story. Sunday I'm doing Amazon Web Services training; Monday I'm in the HPC workshop; Tuesday I'm doing Powershell Fundamentals (time to see how the other half lives, and anyway I've heard good things about Powershell) and Ganeti (wanted to learn about that for a while). As for the talks: I'm not as overwhelmed this year, but the Vint Cerf speech oughta be good, and anyhow I'm sure there will be lots I can figure out on the day.

Completely non-techrelated link of the day: "On Drawing". This woman is an amazing writer.

-

Hadoop and samtools

I've been asked to revisit Hadoop at $WORK. About a year ago I got a small cluster (3 nodes) working and was plugging away at Myrna...but then our need for Myrna disappeared, and Hadoop was left fallow. The need this time around seems more permanent.

So far I'm trying to get a simple streaming job working. The initial script is pretty simple:

```
samtools view input.bam | cut -f 3 | uniq -c | sed 's/^[\t]*//' | sort -k1,1nr > output.txt
```

This breaks down to:

- mapper.sh: samtools view

- reducer.sh: "cut -f 3 | uniq -c | sed ... | sort ..."

which, invoked Hadoop-style, should be:

```
hstream -input input.bam \
  -file mapper.sh -mapper "mapper.sh" \
  -file reducer.sh -reducer "reducer.sh" \
  -output output.txt
```
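Outside Hadoop, the reducer half can be exercised by hand on a few lines of SAM-style text. A sketch, with two assumptions flagged: the `reducer` function name is mine, and I've used `[[:space:]]` in the sed expression (rather than the `[\t]` above) because `uniq -c` pads its counts with spaces. As in the original pipeline, `uniq -c` only merges adjacent lines, so this assumes the input is already grouped by reference name.

```shell
#!/bin/sh
# reducer: everything after `samtools view` in the pipeline.
# Reads tab-separated SAM-style records on stdin; column 3 is the
# reference name. Prints "COUNT NAME", largest count first.
reducer() {
    cut -f 3 |                      # keep only the reference name
        uniq -c |                   # count runs of identical names
        sed 's/^[[:space:]]*//' |   # strip the padding uniq -c adds
        sort -k1,1nr                # sort by count, descending
}

# Example with made-up records (three on chr1, one on chr2):
#   printf 'r1\t0\tchr1\nr2\t0\tchr1\nr3\t0\tchr1\nr4\t0\tchr2\n' | reducer
```

Feeding it a handful of fake records like this is a quick way to rule the reducer in or out before blaming the framework.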

Running the mapper.sh/reducer.sh files works fine; the problem is that under Hadoop, it fails:

```
2012-11-06 12:07:30,106 INFO org.apache.hadoop.streaming.PipeMapRed: R/W/S=1000/0/0 in:NA [rec/s] out:NA [rec/s]
2012-11-06 12:07:30,110 INFO org.apache.hadoop.streaming.PipeMapRed: MRErrorThread done
2012-11-06 12:07:30,111 WARN org.apache.hadoop.streaming.PipeMapRed: java.io.IOException: Broken pipe
	at java.io.FileOutputStream.writeBytes(Native Method)
	at java.io.FileOutputStream.write(FileOutputStream.java:260)
	at java.io.BufferedOutputStream.write(BufferedOutputStream.java:105)
```

I'm unsure right now if that's [this error][3] or something else I've done wrong. Oh well, it'll be fun to turn on debugging and see what's going on under the hood...

...unless, of course, I'm wasting my time. A quick search turned up a number of Hadoop-based bioinformatics tools ([Biodoop][4], [SeqPig][5] and [Hadoop-Bam][6]), and I'm sure there are a crapton more.

Other chores:

- Duplicating pythonbrew/modules work on another server since our cluster is busy

- Migrating our mail server to a VM

- Setting up printing accounting with Pykota (latest challenge: dealing with usernames that aren't in our LDAP tree)

- Accumulated paperwork

- Renewing lapsed support on a Very Important Server

Oh well, at least I'm registered for [LISA][7]. Woohoo!

-

Mark Burgess' talk at LISA 11

I've been catching up on the talks at LISA last year, and one of them was Mark Burgess' talk "3 Myths and 3 Challenges to Bring System Administration out of the Dark Ages". (Anyone else reminded of "7 things about lawyers the occult can't explain?") If I was there, I'd've made this comment; as it is, I'll leave it here.

One of his points was that in this brave new world, we need to let go of serialism ("A follows B follows C, and that's Just The Way It Is(tm)"). That's the old way of thinking, he said, the Industrial way; we can do much more in parallel than we ever could in serial.

It occurs to me that it might be better to say that needless serialism can be let go of. Like a Makefile: the final executable depends on all the object files; without them, there's no sense trying to create it. But the object files typically depend on a file or two each (a .c and .h file, say), and there's no reason they can't be compiled in parallel ("make -j9"). Dependencies are there for a reason, and it is no bad thing to hold on to them.
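The same idea in a few lines of shell, as a sketch: independent steps run in the background, and the one step that truly depends on them waits. `compile_one` and `link_all` are stand-ins that just write files, not real compiler invocations, and `build` is a name I made up.

```shell
#!/bin/sh
# Stand-ins for real work: each "compile" writes one object file,
# and the "link" concatenates every object into the final artifact.
compile_one() { echo "obj from $1" > "$2/$1.o"; }
link_all()    { cat "$1"/*.o > "$1/app"; }

# build DIR SRC...
# Objects have no ordering between them, so they run in parallel;
# the link step needs all of them, so it waits.
build() (
    dir=$1
    shift
    for src in "$@"; do
        compile_one "$src" "$dir" &   # needless serialism removed
    done
    wait                              # the dependency worth keeping
    link_all "$dir"
)

# Example: build /tmp/demo a b c
```

A bare `wait` ignores whether the background jobs succeeded; make tracks that for you, which is one more reason to let it own the dependency graph.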

(Kinda like the misquoting of Emerson. Often, you hear "Consistency is the hobgoblin of little minds." But the quote actually begins "A foolish consistency..." And now, having demonstrated my superiority by quoting Wikipedia, I will now disappear up my own ass.)

-

Can't Face Up

How many times have I tried Just to get away from you, and you reel me back? How many times have I lied That there's nothing that I can do? -- Sloan

Friday morning started with a quick look at Telemundo ("PRoxima: Esclavas del sexo!"), then a walk to Philz coffee. This time I got the Tesora blend (their hallmark) and wow, that's good coffee. Passed a woman pulling two tiny dogs across the street: "C'mon, peeps!" Back at the tables I checked my email and got an amazing bit of spam about puppies, and how I could buy some rare breeds for ch33p.

First up was the Dreamworks talk. But before that, I have to relate something.

Earlier in the week I ran into Sean Kamath, who was giving the talk, and told him it looked interesting and that I'd be sure to be there. "Hah," he said, "Wanna bet? Tom Limoncelli's talk is opposite mine, and EVERYONE goes to a Tom Limoncelli talk. There's gonna be no one at mine."

Then yesterday I happened to be sitting next to Tom during a break, and he was discussing attendance at the different presentations. "Mine's tomorrow, and no one's going to be there." "Why not?" "Mine's opposite the Dreamworks talk, and EVERYONE goes to Dreamworks talks."

Both were quite amused -- and possibly a little relieved -- to learn what the other thought.

But back at the ranch: FIXME in 2008, Sean gave a talk on Dreamworks and someone asked afterward "So why do you use NFS anyway?" This talk was meant to answer that.

So, why? Two reasons:

- Because it works.

They use lots of local caching (their filers come from NetApp, and they also have a caching box), a global namespace, data hierarchy (varying on the scales of fast, reliable and expensive), leverage the automounter to the max, and 10GB core links everywhere, and it works.

- What else are you gonna use? Hm?

FTP/rcp/rdist? Nope. SSH? Won't handle the load. AFS lacks commercial support -- and it's hard to get the head of a billion-dollar business to buy into anything without commercial support.

They cache for two reasons: global availability and scalability. First, people in different locations -- like on different sides of the planet (oh, what an age we live in!) -- need access to the same files. (Most data has location affinity, but this will not necessarily be true in the future.) Geographical distribution and the speed of light do cause some problems: while data reads and getattr() are helped a lot by the caches, first open, sync()s and writes are slow when the file is in India and it's being opened in Redwood. They're thinking about improvements to the UI to indicate what's happening to reduce user frustration. But overall, it works and works well.

Scalability is just as important: thousands of machines hitting the same filer will melt it, and the way scenes are rendered, you will have just that situation. Yes, caching adds latency, but it's still faster than an overloaded filer. (It also requires awareness of close-to-open consistency.)

Automounter abuse is rampant at DW; if one filer is overloaded, they move some data somewhere else and change the automount maps. (They're grateful for the automounter version in RHEL 5: it no longer requires that the node be rebooted to reload the maps.) But like everything else it requires a good plan, or it gets confusing quickly.

Oh, and quick bit of trivia: they're currently sourcing workstations with 96GB of RAM.

One thing he talked about was that there are two ways to do sysadmin: rule-enforcing and policy-driven ("No!") or creative, flexible approaches to helping people get their work done. The first is boring; the second is exciting. But it does require careful attention to customers' needs.

So for example: the latest film DW released was "Megamind". This project was given a quota of 85 TB of storage; they finished the project with 75 TB in use. Great! But that doesn't account for 35 TB of global temp space that they used.

When global temp space was first brought up, the admins said, "So let me be clear: this is non-critical and non-backed up. Is that okay with you?" "Oh sure, great, fine." So the admins bought cheap-and-cheerful SATA storage: not fast, not reliable, but man it's cheap.

Only it turns out that non-backed up != non-critical. See, the artists discovered that this space was incredibly handy during rendering of crowds. And since space was only needed overnight, say, the space used could balloon up and down without causing any long-term problems. The admins discovered this when the storage went down for some reason, and the artists began to cry -- a day or two of production was lost because the storage had become important to one side without the other realizing it.

So the admins fixed things and moved on, because the artists need to get things done. That's why he's there. And if he does his job well, the artists can do wonderful things. He described watching "Madagascar", and seeing the crowd scenes -- the ones the admins and artists had sweated over. And they were good. But the rendering of the water in other scenes was amazing -- it blew him away, it was so realistic. And the artists had never even mentioned that; they'd just made magic.

Understand that your users are going to use your infrastructure in ways you never thought possible; what matters is what gets put on the screen.

Challenges remain:

Sometimes data really does need to be at another site, and caching doesn't always prevent problems. And problems in a data render farm (which is using all this data) tend to break everything else.

Much needs to be automated: provisioning, re-provisioning and allocating storage is mostly done by hand.

Disk utilization is hard to get in real time with > 4 PB of storage worldwide; it can take 12 hours to get a report on usage by department on 75 TB, and that doesn't make the project managers happy. Maybe you need a team for that...or maybe you're too busy recovering from knocking over the filer by walking 75 TB of data to get usage by department.

Notifications need to be improved. He'd love to go from "Hey, a render farm just fell over!" to "Hey, a render farm's about to fall over!"

They still need configuration management. They have a homegrown one that's working so far. QOTD: "You can't believe how far you can get with duct tape and baling wire and twine and epoxy and post-it notes and Lego and...we've abused the crap out of free tools."

I went up afterwards and congratulated him on a good talk; his passion really came through, and it was amazing to me that a place as big as DW uses the same tools I do, even if it is on a much larger scale.

I highly recommend watching his talk (FIXME: slides only for now). Do it now; I'll be here when you get back.

During the break I got to meet Ben Rockwood at last. I've followed his blog for a long time, and it was a pleasure to talk with him. We chatted about Ruby on Rails, Twitter starting out on Joyent, upcoming changes in Illumos now that they've got everyone from Sun but Jonathan Schwartz (no details except to expect awesome and a renewed focus on servers, not desktops), and the joke that Joyent should just come out with it and call itself "Sun". Oh, and Joyent has an office in Vancouver. Ben, next time you're up drop me a line!

Next up: Twitter. 165 million users, 90 million tweets per day, 1000 tweets per second....unless the Lakers win, in which case it peaks at 3085 tweets per second. (They really do get TPS reports.) 75% of those are by API -- not the website. And that percentage is increasing.

Lessons learned:

Nothing works the first time; scale using the best available tech and plan to build everything more than once.

(Cron + ntp) x many machines == enough load on, say, the central syslog collector to cause micro outages across the site. (Oh, and speaking of logging: don't forget that syslog truncates messages > MTU of packet.)
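
The usual cure for this kind of synchronized stampede is to add a per-host delay ("splay") before the job does its work. Here's a minimal sketch of the idea; it's my own illustration rather than anything shown in the talk, and the function name, hostnames and five-minute window are all made up.

```python
import hashlib

def splay_seconds(hostname: str, window: int = 300) -> int:
    """Map a hostname to a stable delay in the range [0, window) seconds.

    Hashing the hostname (rather than picking a random number) means each
    machine always gets the same offset, so its job still runs at a
    predictable time.
    """
    digest = hashlib.sha256(hostname.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % window

# Hypothetical fleet: 1000 machines whose clocks all agree thanks to ntp.
hosts = [f"web{i:03d}.example.com" for i in range(1000)]
delays = [splay_seconds(h) for h in hosts]

# Without splay every host fires in the same second; with it, the load is
# spread over the whole window.
print(f"{len(set(delays))} distinct start offsets across {len(hosts)} hosts")
```

Each cron job would sleep for its own offset before doing anything that touches a shared service.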

RRDtool isn't good for them, because by the time you want to figure out what that one-minute outage was about two weeks ago, RRDtool has averaged away the data. (At this point Tobi Oetiker, a few seats down from me, said something I didn't catch. Dang.)
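
To make the averaging problem concrete, here's a small sketch with invented numbers: an hour of per-minute error rates containing a single one-minute outage, rolled up into one hourly value. The `consolidate` helper is hypothetical and only imitates the general idea of a round-robin archive; it is not RRDtool's code.

```python
def consolidate(samples, step, func):
    """Collapse every `step` consecutive samples into one value using `func`."""
    return [func(samples[i:i + step]) for i in range(0, len(samples), step)]

def mean(values):
    return sum(values) / len(values)

# Made-up data: percentage of failed requests per minute, for one hour.
per_minute = [0.0] * 60
per_minute[17] = 100.0  # one minute of total outage

hourly_avg = consolidate(per_minute, 60, mean)
hourly_max = consolidate(per_minute, 60, max)

print("hourly average:", round(hourly_avg[0], 2))  # the outage shrinks to under 2%
print("hourly maximum:", hourly_max[0])            # the outage is still visible
```

Keeping a maximum alongside the average (RRDtool does have a MAX consolidation function) preserves the spike, though I have no idea whether that would have been enough for Twitter's needs.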

Ops mantra: find the weakest link; fix; repeat. OPS stats: MTTD (mean time to detect problem) and MTTR (MT to recover from problem).
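
For what it's worth, both stats are just averages over an incident log. A minimal sketch, assuming each incident records when it started, when it was detected and when service was restored (all in minutes, all invented), and assuming both intervals are measured from the start of the incident; definitions vary, and the talk didn't spell theirs out.

```python
# Hypothetical incident log: (started, detected, recovered), in minutes.
incidents = [
    (0, 5, 35),
    (100, 102, 112),
    (200, 211, 261),
]

def mean(values):
    return sum(values) / len(values)

# Mean time to detect: how long problems go unnoticed.
mttd = mean([detected - started for started, detected, _ in incidents])

# Mean time to recover: how long until service is back, counted from the start.
mttr = mean([recovered - started for started, _, recovered in incidents])

print(f"MTTD: {mttd} min, MTTR: {mttr} min")
```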

It may be more important to fix the problem and get things going again than to have a post-mortem right away.

At this scale, at this time, system administration turns into a large programming project (because all your info is in your config. mgt tool, correct?). They use Puppet + hundreds of Puppet modules + SVN + post-commit hooks to ensure code reviews.

Occasionally someone will make a local change, then change permissions so that Puppet won't change it. This has led to a sysadmin mantra at Twitter: "You can't chattr +i with broken fingers."

Curve fitting and other basic statistical tools can really help -- they were able to predict the Twitpocalypse (first tweet ID > 2^32) to within a few hours.
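
Here's roughly what that kind of prediction looks like in its simplest form: fit a straight line to (day, highest tweet ID) samples and solve for the day the line crosses 2^32. The samples below are invented, and I'm assuming linear growth only to keep the sketch short; I don't know what model Twitter actually fitted.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

# Made-up samples: highest tweet ID seen at the end of each day.
days = [0, 1, 2, 3, 4]
max_ids = [4_000_000_000 + 20_000_000 * d for d in days]

slope, intercept = fit_line(days, max_ids)

# Solve slope * day + intercept = 2**32 for day.
limit = 2 ** 32
days_until_overflow = (limit - intercept) / slope

print(f"IDs pass 2^32 about {days_until_overflow:.1f} days after the first sample")
```

With real data you'd want more samples and probably a curve that allows for accelerating growth, but the extrapolation step is the same.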

Decomposition is important to resiliency. Take your app and break it into n different independent, non-interlocked services. Put each of them on a farm of 20 machines, and now you no longer care if a machine that does X fails; it's not the machine that does X.

Because of this Nagios was not a good fit for them; they don't want to be alerted about every single problem, they want to know when 20% of the machines that do X are down.
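
A sketch of what "alert on the pool, not the machine" might look like. The pool names, the data layout and the helper are all hypothetical; the only number taken from the talk is the 20% threshold.

```python
def pools_to_alert(status, threshold_percent=20):
    """Return the pools in which at least threshold_percent of hosts are down.

    `status` maps pool name -> {hostname: True if the host is up}.
    """
    alerts = []
    for pool, hosts in status.items():
        down = sum(1 for is_up in hosts.values() if not is_up)
        # Integer comparison avoids any floating-point fuzz at exactly 20%.
        if hosts and down * 100 >= threshold_percent * len(hosts):
            alerts.append(pool)
    return sorted(alerts)

# Made-up example: two farms of 20 machines each.
status = {
    "search": {f"search{i:02d}": i != 0 for i in range(20)},    # 1 of 20 down
    "timeline": {f"timeline{i:02d}": i >= 4 for i in range(20)},  # 4 of 20 down
}

print(pools_to_alert(status))
```

One dead search box stays quiet; four dead timeline boxes (20% of the farm) pages someone.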

Config management + LDAP for users and machines at an early, early stage made a huge difference in ease of management. But this was a big culture change, and management support was important.

And then...lunch with Victor and his sister. We found Good Karma, which had really, really good vegan food. I'm definitely a meatatarian, but this was very tasty stuff. And they've got good beer on tap; I finally got to try Pliny the Elder, and now I know why everyone tries to clone it.

Victor talked about one of the good things about config mgt for him: yes, he's got a smaller number of machines, but when he wants to set up a new VM to test something or other, he can get that many more tests done because he's not setting up the machine by hand each time. I hadn't thought of this advantage before.

After that came the Facebook talk. I paid a little less attention to this, because it was the third ZOMG-they're-big talk I'd been to today. But there were some interesting bits:

Everyone talks about avoiding hardware as a single point of failure, but software is a single point of failure too. Don't compound things by pushing errors upstream.

During the question period I asked them if it would be totally crazy to try different versions of software -- something like the security papers I've seen that push web pages through two different VMs to see if any differences emerge (though I didn't put it nearly so well). Answer: we push lots of small changes all the time for other reasons (problems emerge quickly, so easier to track down), so in a way we do that already (because of staged pushes).

Because we've decided to move fast, it's inevitable that problems will emerge. But you need to learn from those problems. The Facebook outage was an example of that.

Always do a post-mortem when problems emerge, and if you focus on learning rather than blame you'll get a lot more information, engagement and good work out of everyone. (And maybe the lesson will be that no one was clearly designated as responsible for X, and that needs to happen now.)

The final speech of the conference was David Blank-Edelman's keynote on the resemblance between superheroes and sysadmins. I watched for a while and then left. I think I can probably skip closing keynotes in the future.

And then....that was it. I said goodbye to Bob the Norwegian and Claudio, then I went back to my room and rested. I should have slept but I didn't; too bad, 'cos I was exhausted. After a while I went out and wandered around San Jose for an hour to see what I could see. There was the hipster cocktail bar called "Cantini's" or something; billiards, flood pants, cocktails, and the sign on the door saying "No tags -- no colours -- this is a NEUTRAL ZONE."

I didn't go there; I went to a generic looking restaurant with room at the bar. I got a beer and a burger, and went back to the hotel.

-

Anyone who's anyone

I missed my chance, but I think I'm gonna get another...
-- Sloan

Thursday morning brought Brendan Gregg's (nee Sun, then Oracle, and now Joyent) talk about data visualization. He introduced himself as the shouting guy, and talked about how heat maps allowed him to see what the video demonstrated in a much more intuitive way. But in turn, these require accurate measurement and quantification of performance: not just "I/O sucks" but "the whole op takes 10 ms, 1 of which is CPU and 9 of which is latency."

Some assumptions to avoid when dealing with metrics:

The available metrics are correctly implemented. Are you sure there's not a kernel bug in how something is measured? He's come across them.

The available metrics are designed by performance experts. Mostly, they're kernel developers who were trying to debug their work, and found that their tool shipped.

The available metrics are complete. Unless you're using DTrace, you simply won't always find what you're looking for.

He's not a big fan of using IOPS to measure performance. There are a lot of questions when you start talking about IOPS. Like what layer?

- app

- library

- sync call

- VFS

- filesystem

- RAID

- device

(He didn't add political and financial, but I think that would have been funny.)

Once you've got a number, what's good or bad? The number can change radically depending on things like library/filesystem prefetching or readahead (IOPS inflation), read caching or write cancellation (deflation), the size of a read (he had an example demonstrating how measured capacity/busy-ness changes depending on the size of reads)...probably your company's stock price, too. And iostat or your local equivalent averages things, which means you lose outliers...and those outliers are what slow you down.

IOPS and bandwidth are good for capacity planning, but latency is a much better measure of performance.

And what's the best way of measuring latency? That's right, heatmaps. Coming from someone who worked on Fishworks, that's not surprising, but he made a good case. It was interesting to see how it's as much art as science...and given that he's exploiting the visual cortex to make things clear that never were, that's true in a few different ways.
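
The data behind a latency heatmap is simple enough to sketch: bucket every I/O by when it happened and how long it took, then count what lands in each cell (the colour of a cell comes from its count). This is my own toy version with invented events, not how Fishworks or DTrace does it.

```python
from collections import Counter

def heatmap_counts(events, time_step, latency_step):
    """Count events per (time bucket, latency bucket) cell.

    `events` is an iterable of (timestamp_seconds, latency_ms) pairs.
    """
    counts = Counter()
    for timestamp, latency in events:
        cell = (int(timestamp // time_step), int(latency // latency_step))
        counts[cell] += 1
    return counts

# Made-up second of I/O: 99 fast operations at 1 ms and one 500 ms outlier.
events = [(0.01 * i, 1.0) for i in range(99)] + [(0.995, 500.0)]

grid = heatmap_counts(events, time_step=1.0, latency_step=10.0)
mean_latency = sum(latency for _, latency in events) / len(events)

print("mean latency (ms):", round(mean_latency, 2))
for (t_bucket, l_bucket), count in sorted(grid.items()):
    print(f"second {t_bucket}, {l_bucket * 10}-{l_bucket * 10 + 10} ms: {count} I/Os")
```

The average says everything took about 6 ms, which describes none of the actual operations; the grid keeps the lone 500 ms outlier in a cell of its own, way up at the top of the map where you can see it.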

This part of the presentation was so visual that it's best for you to go view the recording (and anyway, my notes from that part suck).

During the break, I talked with someone who had worked at Nortel before it imploded. Sign that things were going wrong: new execs come in (RUMs: Redundant Unisys Managers) and alla sudden everyone is on the chargeback model. Networks charges ops for bandwidth; ops charges networks for storage and monitoring; both are charged by backups for backups, and in turn are charged by them for bandwidth and storage and monitoring.

The guy I was talking to figured out a way around this, though. Backups had a penalty clause for non-performance that no one ever took advantage of, but he did: he requested things from backup and proved that the backups were corrupt. It got to the point where the backup department was paying his department every month. What a clusterfuck.

After that, a quick trip to the vendor area to grab stickers for the kids, then back to the presentations.